Why “AI” instead of “LLM”

I know. What I actually mean most of the time is Large Language Model (LLM). Transformer architecture. Probability distribution over tokens. Trained on absurd amounts of text scraped from every corner of the Internet, from the nooks and crannies of Reddit to that weird Minecraft forum you thought was dead in 2012. I kinda know the ideas.

I say “AI” because AI is the word people actually understand.

“I think it’s time to accept that ‘AI’ is good enough, and is already widely understood.” — Simon Willison

If you walk into a room of normal humans and say, “I built a tool using a fine-tuned LLM with retrieval-augmented generation and custom embeddings,” you’ll get polite nods, maybe a half-hearted “cool.” But no one will understand your verbal diarrhoea. Now try, “I built an AI that answers questions.” Instant attention. Phones come out. Aunties ask if it can do astrology. The marketing intern thinks you’re a wizard.

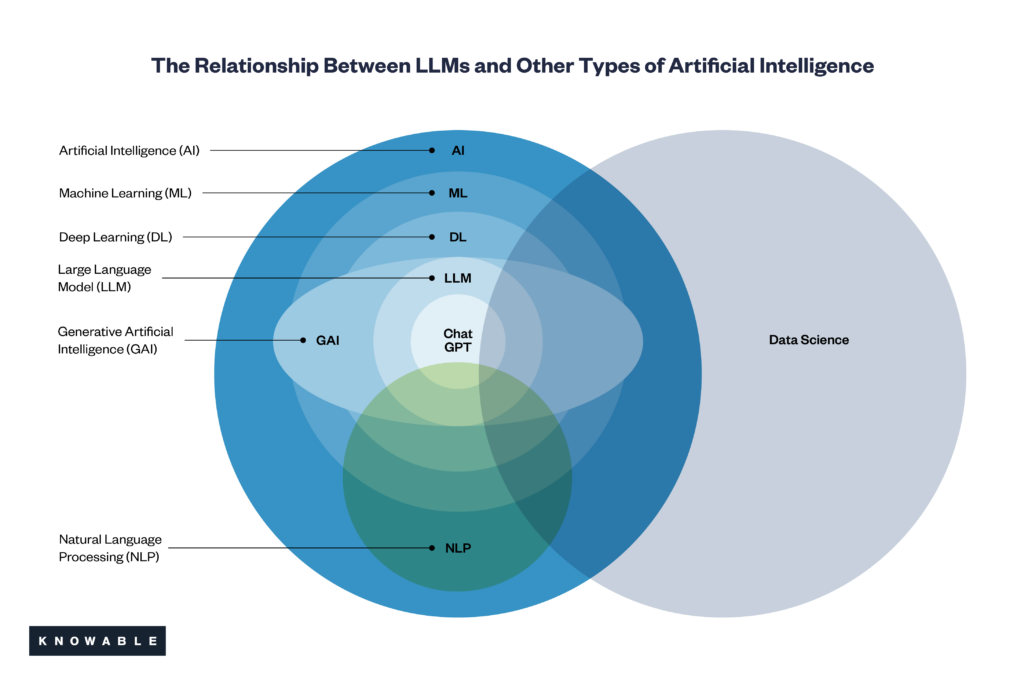

Yes, “AI” is imprecise. Technically, it covers everything from computer vision to reinforcement learning to whatever Elon Musk promises this week. But language is about utility, not pedantic correctness. When we say “AI,” the person across the table knows we mean “the thing that talks back like ChatGPT.”

Also, “LLM” is a terrible brand. It sounds like a boring accounting firm or a law school entrance exam. “AI” has cultural gravity. Movies, books, hype cycles, everything primed us to say “AI” and nod solemnly, even when it’s an autocomplete suggestion with better PR.

And in startup land, perception is half the product. Nobody’s raising a seed round off a pitch deck titled “Fine-tuned Statistical Token Autocomplete System.” Investors want “AI.” Customers want “AI.” Journalists want “AI.” You can tattoo “transformer” on your arm if it makes you feel better, but the real world has already voted.

So yes, let’s get comfortable with “AI.” It’s wrong in the same way that calling your car a “vehicle” instead of a “four-stroke internal combustion machine with torque converter” is wrong. Technically off, practically perfect.

And if someone wants to lecture me about how it’s not “AI,” it’s just an “LLM,” I’ll happily nod. Then I’ll go back to using “AI” because, ironically, my own model of human communication predicts that it is the word most likely to land.